Google AI chips challenge Nvidia in inference race

Google is accelerating its push into artificial intelligence hardware, preparing new chips designed to speed up AI responses and challenge the dominance of Nvidia in one of the fastest-growing segments of the tech industry.

The move signals a strategic shift as the AI boom transitions from training large models to running them efficiently in real time—a process known as inference.

A shift toward faster AI results

Executives at Alphabet Inc. say demand is rapidly evolving.

“As demand grows for quickly processing AI queries, it now becomes sensible to specialize chips,” said Jeff Dean, Google's chief scientist.

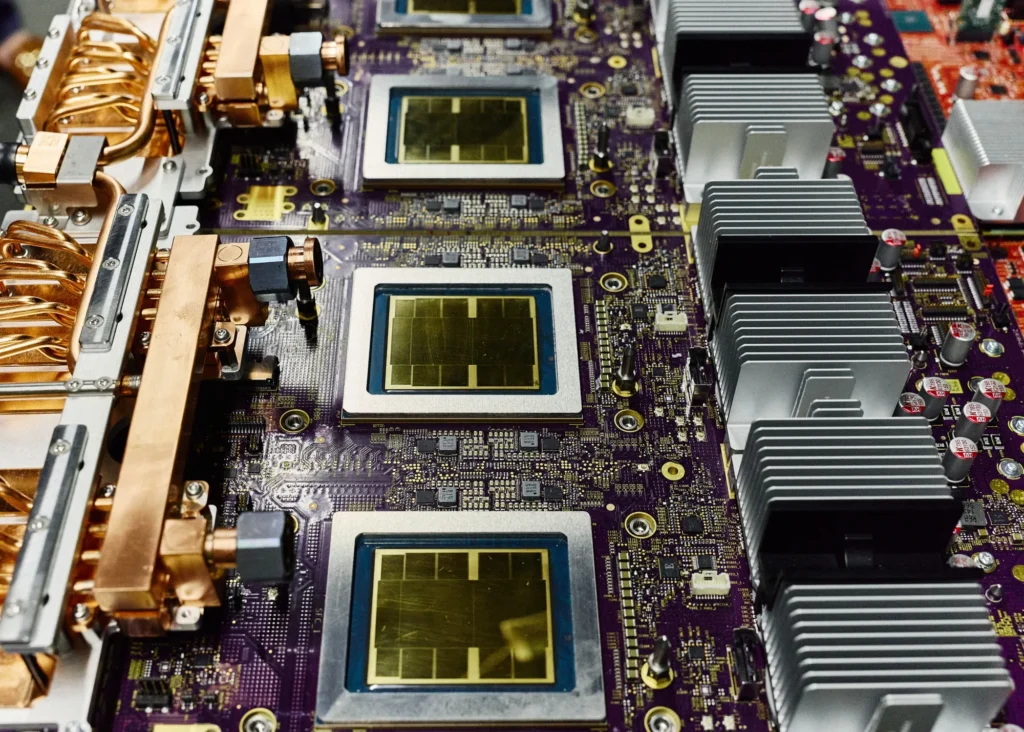

Google is expected to unveil its latest generation of tensor processing units (TPUs) at its Cloud Next conference, with a growing focus on chips optimized specifically for inference workloads—powering chatbots, AI agents and real-time applications.

Nvidia still leads — but pressure is building

Nvidia remains the industry standard, particularly for training advanced AI models using its GPUs.

But competition is intensifying.

A wave of new chips is targeting inference tasks, where speed and efficiency matter more than raw training power. Nvidia itself has moved into this space, launching new inference-focused hardware following a major technology deal.

Still, Google brings a unique advantage: it designs and deploys its own chips at scale.

That vertical integration allows tight coordination between hardware and AI models—something rivals are only beginning to replicate.

Big tech and AI labs rush to adopt TPUs

Interest in Google’s chips has surged across the industry.

Companies across the industry, including some of Google's direct AI rivals, are increasingly adopting TPUs:

- Meta Platforms signed a multibillion-dollar agreement to use TPUs via Google Cloud

- Anthropic expanded access to as many as 1 million TPUs

- Financial firms and cloud providers are testing the chips for both training and inference

Even companies traditionally reliant on GPUs are now exploring hybrid approaches.

“A lot of people would like to run on both,” said Demis Hassabis, chief executive of Google DeepMind.

Why inference is the new battleground

The AI market is entering a new phase.

While early investment focused on training massive models, the next wave centers on deploying them efficiently at scale—serving millions of user queries instantly.

That shift is reshaping chip design priorities:

- Lower latency (faster responses)

- Reduced costs per query

- Energy efficiency at scale

Analysts say this transition plays to Google’s strengths.

“The battleground is shifting toward inference,” said industry analyst Chirag Dekate. “Google has an infrastructure advantage.”

A decade-long bet paying off

Google’s chip ambitions are not new.

The company began developing TPUs more than a decade ago to handle internal workloads like search, translation and voice recognition—tasks that required massive computational power.

At the time, building specialized hardware was considered risky.

Today, it looks prescient.

Google’s chips now power its flagship AI systems, including its Gemini models, and are increasingly offered to external customers through its cloud platform.

Challenges ahead: supply and strategy

Despite its momentum, Google faces hurdles.

Like Nvidia, it must manage supply constraints as demand surges. Some companies report limited access to TPUs, with priority given to top-tier AI labs.

There’s also a strategic balancing act:

- Allocate chips to Google’s own AI products

- Expand access to enterprise customers

- Avoid creating a closed ecosystem

“There are benefits to keeping TPUs internal,” said Google executive Amin Vahdat. “But that risks becoming a tech island.”

What comes next

As AI adoption accelerates, the competition between Google and Nvidia is entering a new phase.

Training may have defined the first wave of AI.

Inference—faster, cheaper, and everywhere—will define the next.

And in that race, Google is making it clear: it intends to be more than just a software leader.

Author: Staff Writer | Edited for WTFwire.com | SOURCE: Bloomberg.com
